Pi-hole, MetalLB, Layer 2 and all that kind of fun stuff

Posted on March 11, 2019

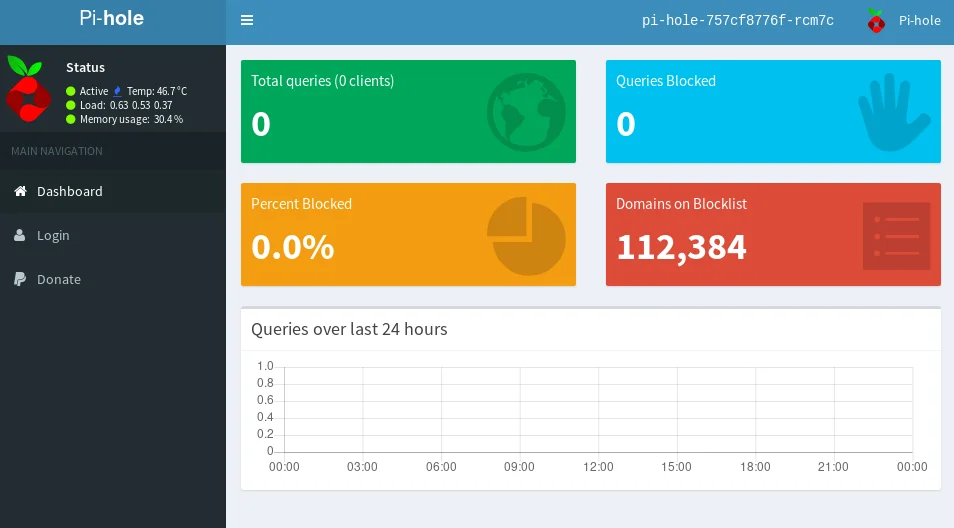

If I were to recommend one service for friends to run at home, it would be pi-hole. For those who might not be familiar, pi-hole is a network-wide ad blocker: it blocks ads and telemetry services by acting as a DNS sinkhole for unwanted domains. It is very simple to get up and running, so if you have a Raspberry Pi lying around gathering dust - INSTALL PI-HOLE. It's well worth it!

I've been running pi-hole for the last couple of years on an old single-core Raspberry Pi Model B+, and that's really where this post came from. A lot of the services that I run at home rely on the pi-hole DNS server, so if the SD card or the board were to fail it would be a bit of a disaster. So the aim was to migrate the pi-hole install from the old board to a Kubernetes cluster made up of a few Raspberry Pi 3B+s (so yeah, if you haven't figured it out yet, I like Raspberry Pis...). The new pi-hole service needs some shared storage so that it no longer relies on the SD cards. DNS clients expect the server to be available on port 53, so the service has to be exposed on an external IP.

There's probably no need for me to go through the initial setup, as there are plenty of guides out there on how to spin up a Pi cluster and install helm.

As you can probably tell from the name, MetalLB is a load balancer for bare-metal Kubernetes clusters, which lets you use services of type LoadBalancer. This is what we are going to use to expose pi-hole to the DNS clients on the network. You can configure MetalLB in either layer 2 mode or BGP mode. Layer 2 mode is the simpler of the two, and it is the one that I went with for my home network.

The helm install of MetalLB requires either some inline config in the values.yaml file or a pre-existing ConfigMap. Layer 2 mode needs an address pool from which it can hand out addresses to services of type LoadBalancer. This address pool should be in the same subnet as your DNS clients, but make sure that the range of addresses is not managed by a DHCP server. Here is an example of a ConfigMap for the network 192.168.2.0/24:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: metallb-config
    data:
      config: |
        address-pools:
        - name: my-ip-space
          protocol: layer2
          addresses:
          - 192.168.2.240-192.168.2.250
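Before applying the ConfigMap, it can be worth sanity-checking the pool against the two constraints mentioned above: it should sit inside the clients' subnet and stay clear of the DHCP range. The snippet below is only a rough sketch of that check using Python's standard `ipaddress` module. The function names are hypothetical, the DHCP range is a made-up example (look up the real one on your router), and it only understands the `first-last` range form used in this post.

```python
import ipaddress


def parse_range(text):
    """Parse a 'first-last' range into a pair of IP addresses."""
    first, last = (ipaddress.ip_address(part.strip()) for part in text.split("-"))
    if first > last:
        raise ValueError(f"range is reversed: {text}")
    return first, last


def check_pool(pool, subnet, dhcp):
    """Return a list of problems with the pool; an empty list means none were found."""
    network = ipaddress.ip_network(subnet)
    pool_first, pool_last = parse_range(pool)
    dhcp_first, dhcp_last = parse_range(dhcp)
    problems = []
    # Both ends inside the subnet means the whole contiguous range is inside it.
    if pool_first not in network or pool_last not in network:
        problems.append(f"{pool} is not fully inside {subnet}")
    # Two closed ranges overlap when each one starts before the other ends.
    if pool_first <= dhcp_last and dhcp_first <= pool_last:
        problems.append(f"{pool} overlaps the DHCP range {dhcp}")
    return problems


if __name__ == "__main__":
    # Assumed DHCP range, purely for illustration.
    for problem in check_pool(
        "192.168.2.240-192.168.2.250",
        "192.168.2.0/24",
        "192.168.2.100-192.168.2.199",
    ):
        print(problem)
```

With the assumed DHCP range, the pool from the ConfigMap passes both checks and the script prints nothing.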

Save the ConfigMap as config.yaml and create it in the cluster:

    kubectl create -f config.yaml

Once the ConfigMap has been applied in the cluster, you can helm install MetalLB:

    helm install --name metallb stable/metallb

There is a handy nginx example in the MetalLB docs that helps you verify that everything is working properly. I hit a little stumbling block at this stage: my nginx server wasn't reachable on its external IP from any of my machines that weren't part of the cluster ("No route to host"). It was available from inside the cluster, but the IP was not being advertised to the rest of my network. After a bit of digging, I discovered that the issue was caused by a couple of my Pis being connected to the network over WiFi rather than Ethernet. The wlan0 interface had to be set to promiscuous mode before the nginx service could be reached over the external IP (see https://github.com/google/metallb/issues/284):

    sudo ifconfig wlan0 promisc

Unfortunately, unlike MetalLB, pi-hole isn't in the stable helm repository. There are a number of charts available on GitHub, but here is the one that I found most useful: https://github.com/mechinn/pi-hole-helm

This chart allows you to expose the pi-hole service over ports 80, 53 and 67 (if you want DHCP) on a load-balancer IP from MetalLB. All that you need to do is clone the repository, update the values.yaml to reflect what you want in your environment, and install the chart:

    helm install --name pi-hole ./pi-hole-helm

To get the external IP address of the pi-hole service, you can run:

    kubectl get svc

The pi-hole dashboard should be available at http://EXTERNAL_IP/admin, where EXTERNAL_IP is the address listed in the EXTERNAL-IP column.

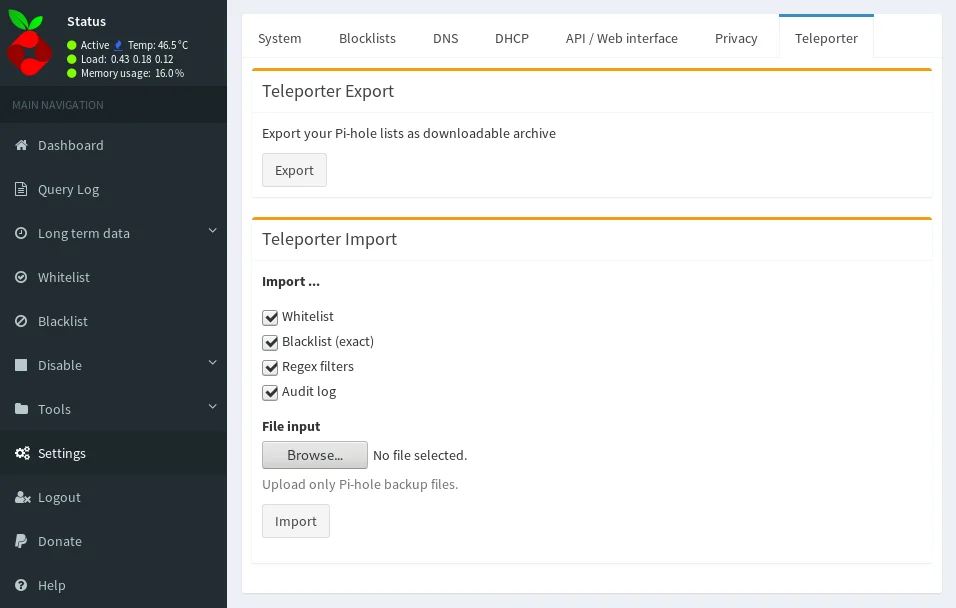
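The dashboard only shows that port 80 is reachable, whereas DNS clients care about port 53. As a rough way to confirm that the external IP actually answers DNS queries from a machine outside the cluster, here is a minimal sketch using only Python's standard library. The function names, the default hostname and the IP in the usage note are all assumptions for illustration; it simply sends one A-record query over UDP and checks that a matching response comes back.

```python
import socket
import struct


def build_query(hostname, query_id=0x1234):
    """Build a minimal DNS query packet asking for the A record of hostname."""
    # Header: ID, flags (only "recursion desired" set), 1 question, 0 other records.
    header = struct.pack("!HHHHHH", query_id, 0x0100, 1, 0, 0, 0)
    # The name is encoded as length-prefixed labels, terminated by a zero byte.
    labels = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in hostname.rstrip(".").split(".")
    )
    # QTYPE 1 = A record, QCLASS 1 = IN (internet).
    return header + labels + b"\x00" + struct.pack("!HH", 1, 1)


def dns_answers(server_ip, hostname="example.com", timeout=2.0):
    """Return True if server_ip replies to a DNS query on UDP port 53."""
    query_id = 0x1234
    query = build_query(hostname, query_id)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        try:
            sock.sendto(query, (server_ip, 53))
            reply, _ = sock.recvfrom(512)
        except OSError:
            # Covers timeouts as well as errors such as "No route to host".
            return False
    if len(reply) < 4:
        return False
    # A genuine reply echoes our ID and has the QR (response) bit set.
    reply_id, flags = struct.unpack("!HH", reply[:4])
    return reply_id == query_id and bool(flags & 0x8000)
```

Calling `dns_answers("192.168.2.240")` from a laptop on the same network (substituting whatever address `kubectl get svc` reported) should return `True` once the service is exposed correctly.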

The Teleporter export and import make the migration very easy, as they bring your Whitelist, Blacklist and regex filters over to the new pi-hole for you.

I have migrated most of my machines over to this new pi-hole instance; however, I am keeping the old physical pi-hole as a secondary DNS server until I can verify how reliable the new setup is. I know that I skimmed through most of this, so if there are any questions, don't hesitate to send me a tweet.